Case Study

StageFlow

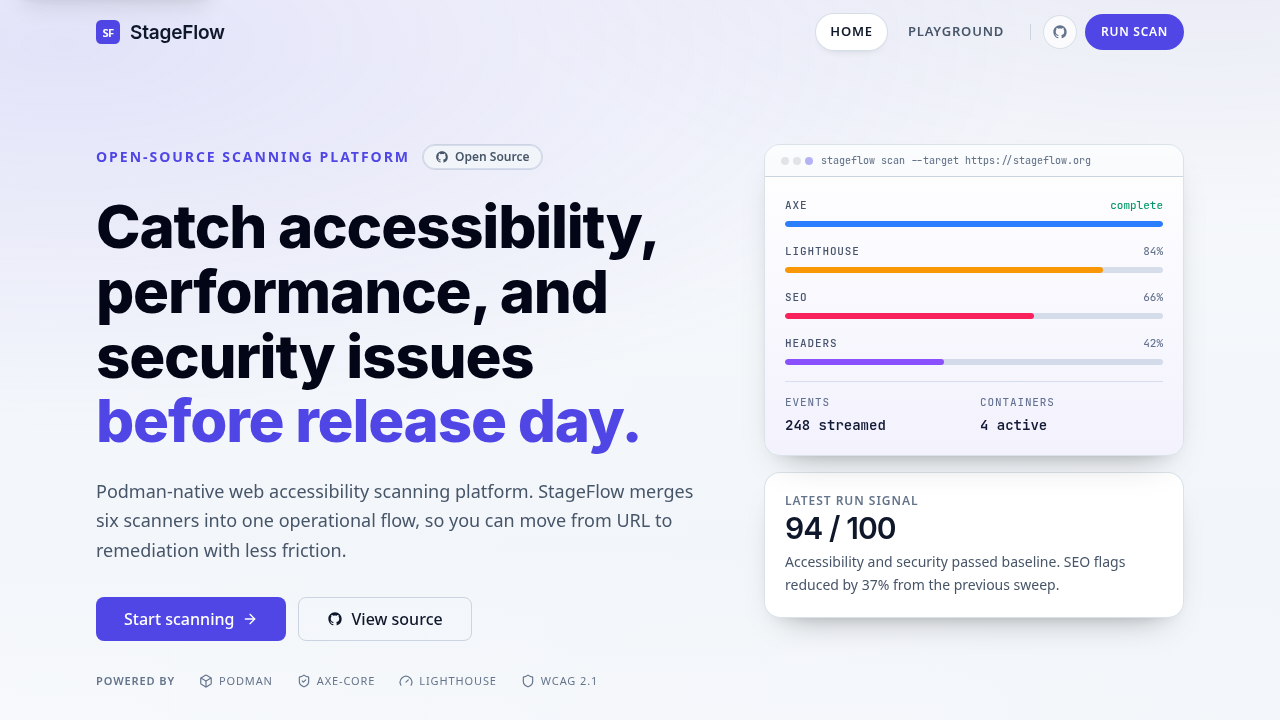

Self-hosted website scan workbench with live execution visibility and evidence-rich unified reports.

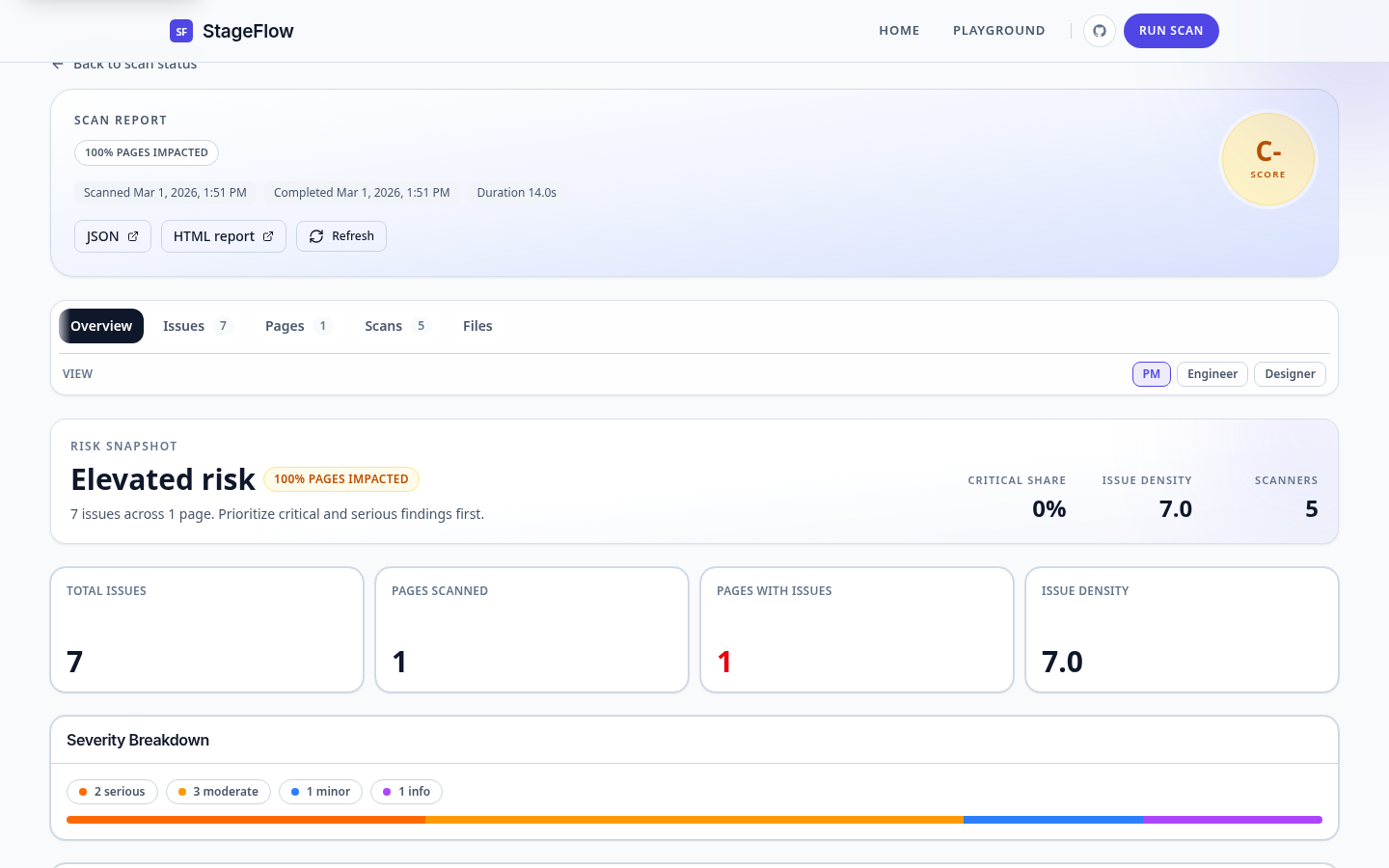

I built a self-hosted scan workbench that runs eight scanners against live URLs or ZIP builds, streams job state over SSE, fingerprints each issue with a 12-character identifier derived from a SHA-256 hash, and merges deduplicated findings into one report with page-level evidence.

8 Built-In Scanners

3 User Phases

8 Durable Consumers

3 Audience Modes

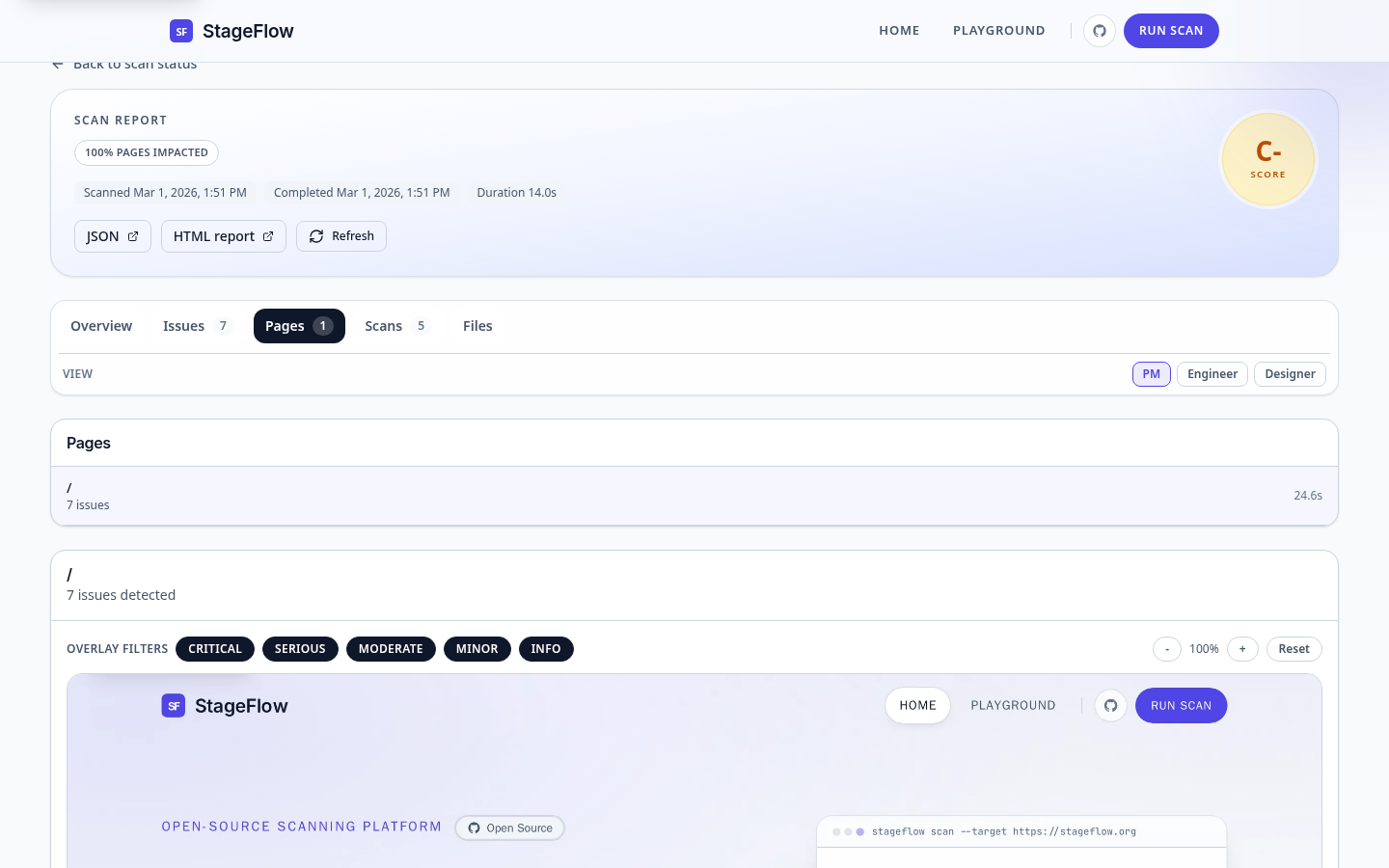

Product Proof

Screens that show the system in context.

Overview

What the product does and why I built it that way.

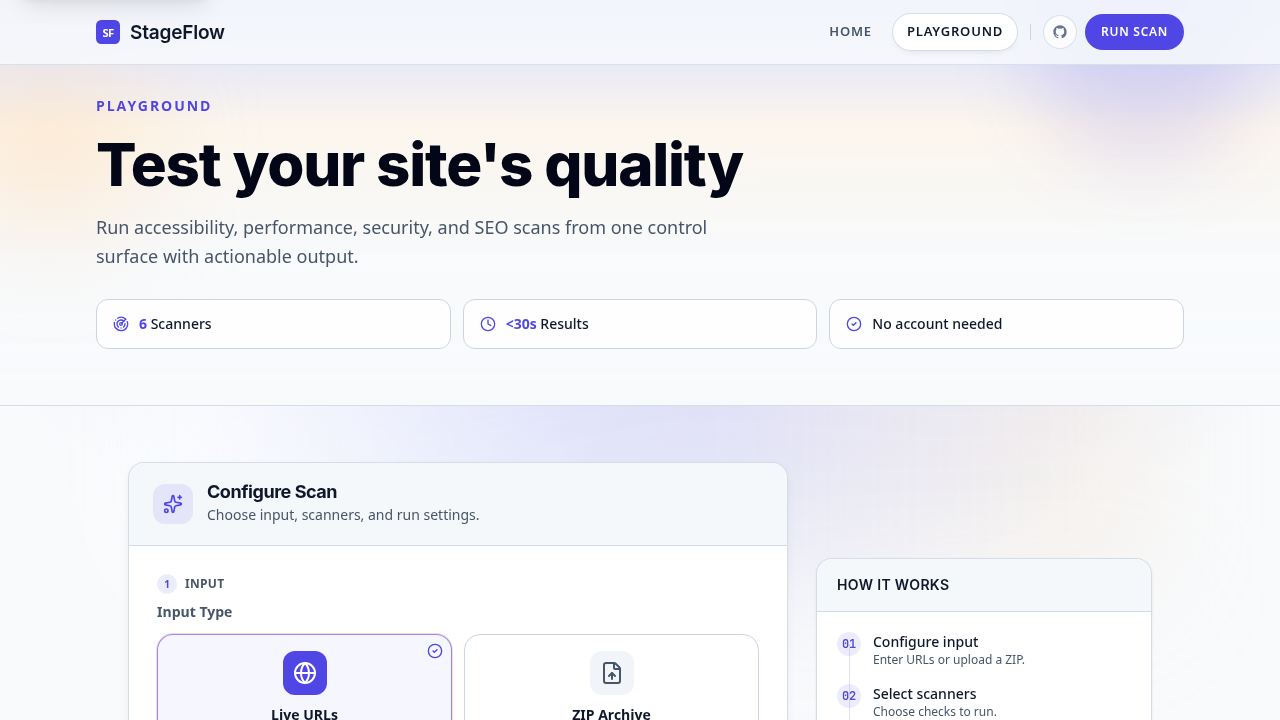

I built StageFlow to give teams one place to configure scans, watch execution, and triage findings without sending data to third-party SaaS. The product accepts live URLs and ZIP-based static builds through the same playground, runs up to eight scanners in rootless Podman pods, and streams job progress over SSE.

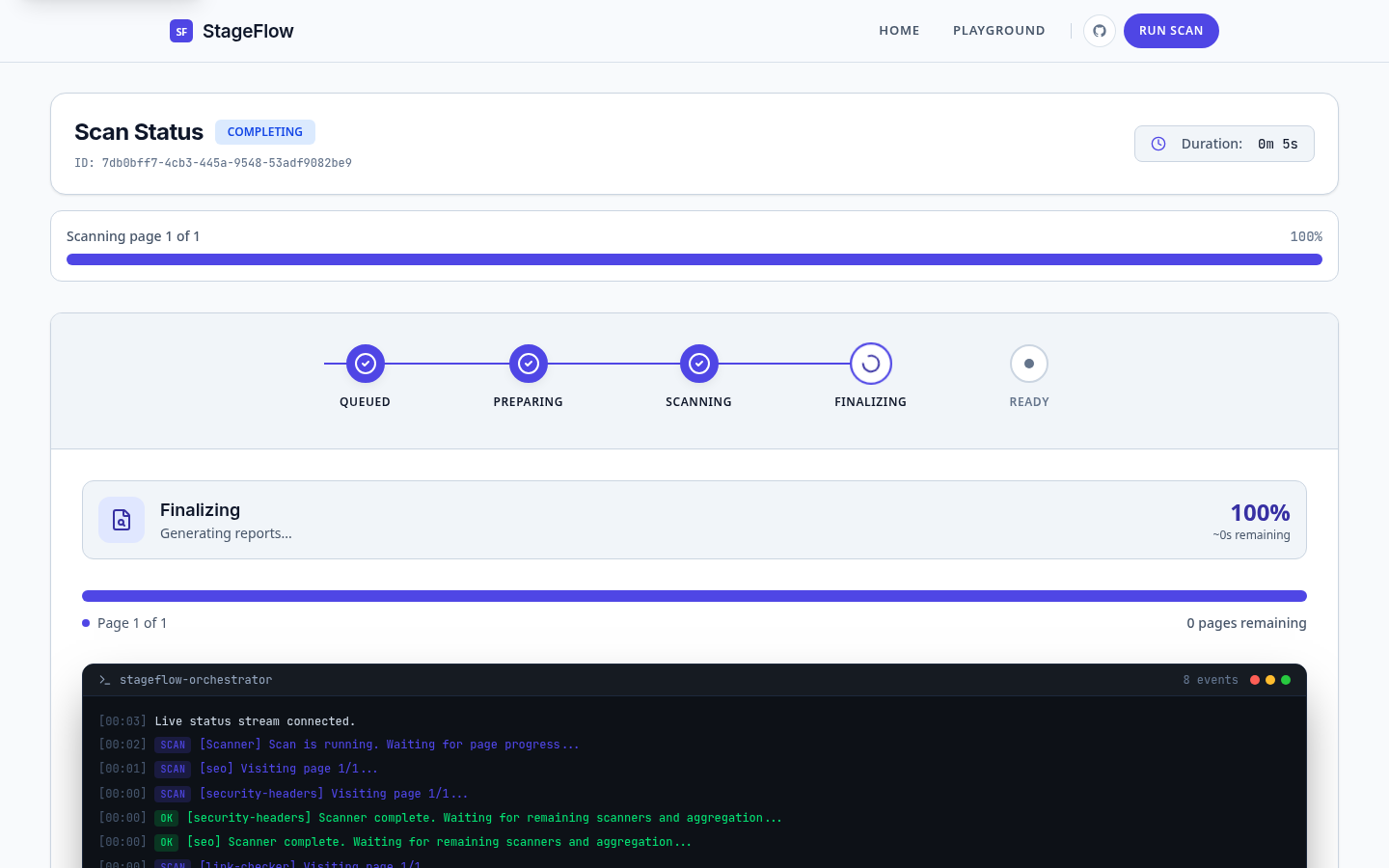

The platform API rejects SSRF-prone targets that fall in any of 17 blocked CIDR ranges (covering the cloud metadata address 169.254.169.254, RFC 1918 private ranges, link-local, multicast, and CGNAT) before publishing to NATS. The orchestrator manages a seven-state FSM in PostgreSQL, launches per-job pods through the Podman Libpod HTTP API, and monitors each scanner with a dedicated goroutine blocked on WaitContainer. A deadline sweeper runs every 30 seconds and catches jobs stuck in EXTRACTING (5-minute limit) or SCANNING (30-minute limit). Once all scanners have reported, the orchestrator downloads their results from MinIO, deduplicates them with a conservative rule equivalence table, and uploads the merged output as a UnifiedReportV2.
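The CIDR check described above can be sketched in Go with the standard `net/netip` package. This is a minimal illustration, not StageFlow's actual code: the `blockedPrefixes` list is a subset chosen to match the categories named above rather than the real 17 ranges, and `CheckAddr` is a hypothetical name.

```go
package main

import (
	"fmt"
	"net/netip"
)

// blockedPrefixes is an illustrative subset of a blocklist (assumption:
// the real list has 17 entries that are not reproduced here).
var blockedPrefixes = mustPrefixes(
	"10.0.0.0/8",     // RFC 1918 private range
	"172.16.0.0/12",  // RFC 1918 private range
	"192.168.0.0/16", // RFC 1918 private range
	"169.254.0.0/16", // link-local, includes cloud metadata 169.254.169.254
	"100.64.0.0/10",  // CGNAT shared address space
	"224.0.0.0/4",    // IPv4 multicast
)

// mustPrefixes parses CIDR strings and panics on a malformed entry,
// which is acceptable for a hard-coded list.
func mustPrefixes(cidrs ...string) []netip.Prefix {
	out := make([]netip.Prefix, 0, len(cidrs))
	for _, c := range cidrs {
		out = append(out, netip.MustParsePrefix(c))
	}
	return out
}

// CheckAddr (hypothetical helper) returns an error when ip is unparsable
// or falls inside one of the blocked prefixes.
func CheckAddr(ip string) error {
	addr, err := netip.ParseAddr(ip)
	if err != nil {
		return fmt.Errorf("parse %q: %w", ip, err)
	}
	// Unmap so that an IPv4-mapped IPv6 address such as ::ffff:10.0.0.1
	// is matched against the IPv4 prefixes.
	addr = addr.Unmap()
	for _, p := range blockedPrefixes {
		if p.Contains(addr) {
			return fmt.Errorf("address %s is in blocked range %s", addr, p)
		}
	}
	return nil
}
```

Calling `CheckAddr("169.254.169.254")` returns an error, while a public address passes. A complete intake check would presumably also resolve the submitted hostname and run every resolved address through the same function, since a hostname can point at an internal address.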

Architecture

The system shape behind the product.

StageFlow uses a single-host, event-driven architecture with strict data ownership boundaries. The platform API validates intake and maintains status projections in memory. JetStream carries lifecycle events across three streams with 72-hour retention. The orchestrator owns job pods and report assembly, and persists state transitions in PostgreSQL. MinIO stores artifacts and reports. Each service owns its data: the orchestrator never reads the platform API’s SQLite database, the platform API never reads the orchestrator’s PostgreSQL database, and neither service accesses the other’s MinIO namespaces.

Ingress

Caddy reverse proxy

TLS termination

Path-based routing

Frontend

SvelteKit 2 with Svelte 5 Runes

Playground UI

SSE status + report views

Platform API

URL / ZIP intake with SSRF validation (17 CIDR ranges)

In-memory status projection (15-min TTL)

SQLite project and baseline storage

Durable State

NATS JetStream (3 streams, 8 consumers, 72h retention)

PostgreSQL job state, events, and scanner results

MinIO artifact and report storage

Orchestrator

Seven-state FSM with idempotent transitions

Scanner dispatch and completion tracking

Report aggregation with cross-scanner deduplication
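Cross-scanner deduplication with short SHA-256 fingerprints might be sketched as follows. Everything specific here is an assumption: the `Finding` fields, the choice of hashed inputs, the rule names in `equivalentRules`, and the reading of "12-character" as the first 12 hex characters of the digest. The source confirms only that fingerprints are 12 characters, derived from SHA-256, and that a conservative rule equivalence table drives deduplication.

```go
package main

import (
	"crypto/sha256"
	"encoding/hex"
	"strings"
)

// Finding is a hypothetical, simplified scanner result.
type Finding struct {
	Scanner string // which scanner reported the issue
	RuleID  string // scanner-specific rule identifier
	PageURL string // page where the issue was observed
	Target  string // element or resource the issue refers to
}

// equivalentRules maps scanner-specific rule IDs to a shared canonical ID.
// The entries are invented placeholders for illustration.
var equivalentRules = map[string]string{
	"scanner-a/missing-alt": "image-alt",
	"scanner-b/image-alt":   "image-alt",
}

// canonicalRule returns the shared ID for a rule, or the rule itself
// when the table has no entry (the conservative default).
func canonicalRule(ruleID string) string {
	if c, ok := equivalentRules[ruleID]; ok {
		return c
	}
	return ruleID
}

// Fingerprint hashes the canonical rule, page, and target, then keeps
// the first 12 hex characters of the SHA-256 digest.
func Fingerprint(f Finding) string {
	key := strings.Join([]string{canonicalRule(f.RuleID), f.PageURL, f.Target}, "\x00")
	sum := sha256.Sum256([]byte(key))
	return hex.EncodeToString(sum[:])[:12]
}

// Dedupe keeps the first finding seen for each fingerprint and
// preserves input order.
func Dedupe(findings []Finding) []Finding {
	seen := make(map[string]struct{}, len(findings))
	out := make([]Finding, 0, len(findings))
	for _, f := range findings {
		fp := Fingerprint(f)
		if _, dup := seen[fp]; dup {
			continue
		}
		seen[fp] = struct{}{}
		out = append(out, f)
	}
	return out
}
```

With this sketch, two scanners that report the same missing alt text on the same element collapse into one finding, while the same rule on a different page stays separate. Rules that are absent from the table are never merged across scanners, which keeps the behavior conservative.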

Execution

Rootless Podman job pods via Libpod HTTP API

Archive extractor container (ZIP jobs)

Up to 8 scanner runner containers in parallel

Next Step

Inspect the implementation

Review intake validation, orchestrator state transitions, scanner manifests, report aggregation, and the CLI project mode in the StageFlow repository.