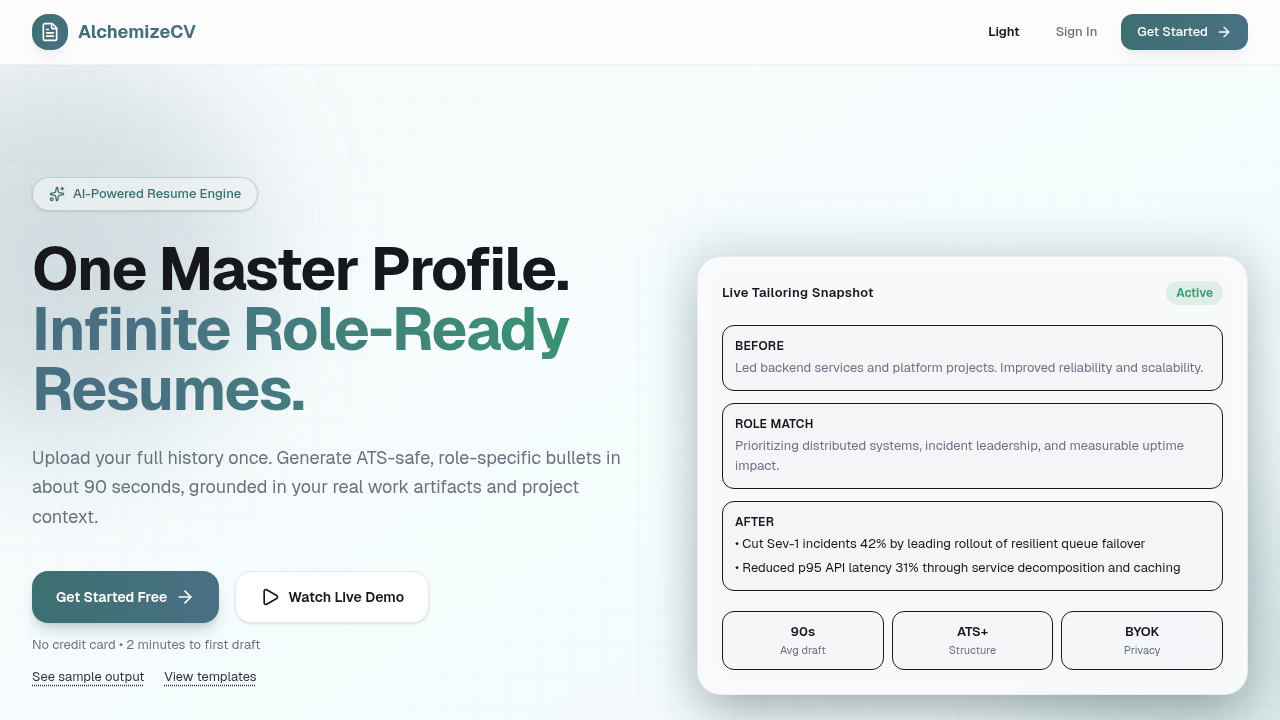

Case Study

AlchemizeCV

Job-search workflow platform that turns a master profile into tailored resumes, grounded project bullets, and tracked applications.

Every tailored resume, project bullet, and tracked application run is produced by a replayable four-phase generation pipeline.

4 Pipeline Phases

3 Job-Hunt Surfaces

<1s Render Time

2 Provider Paths

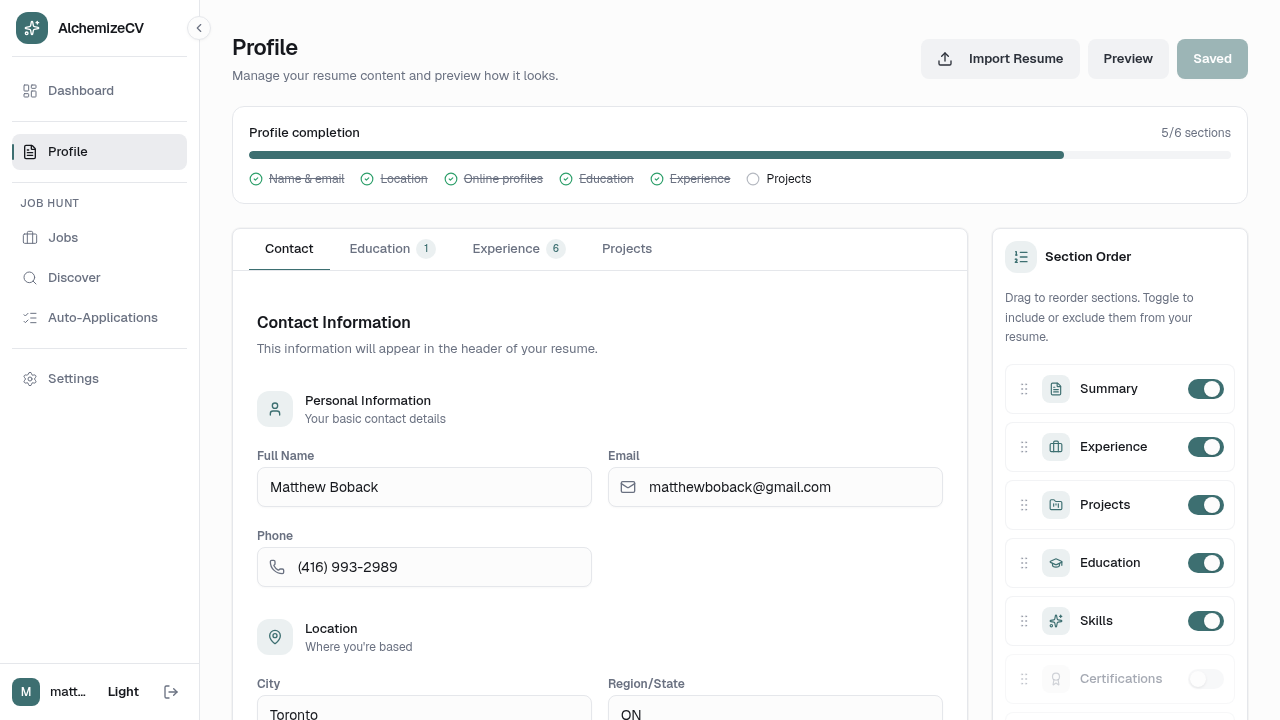

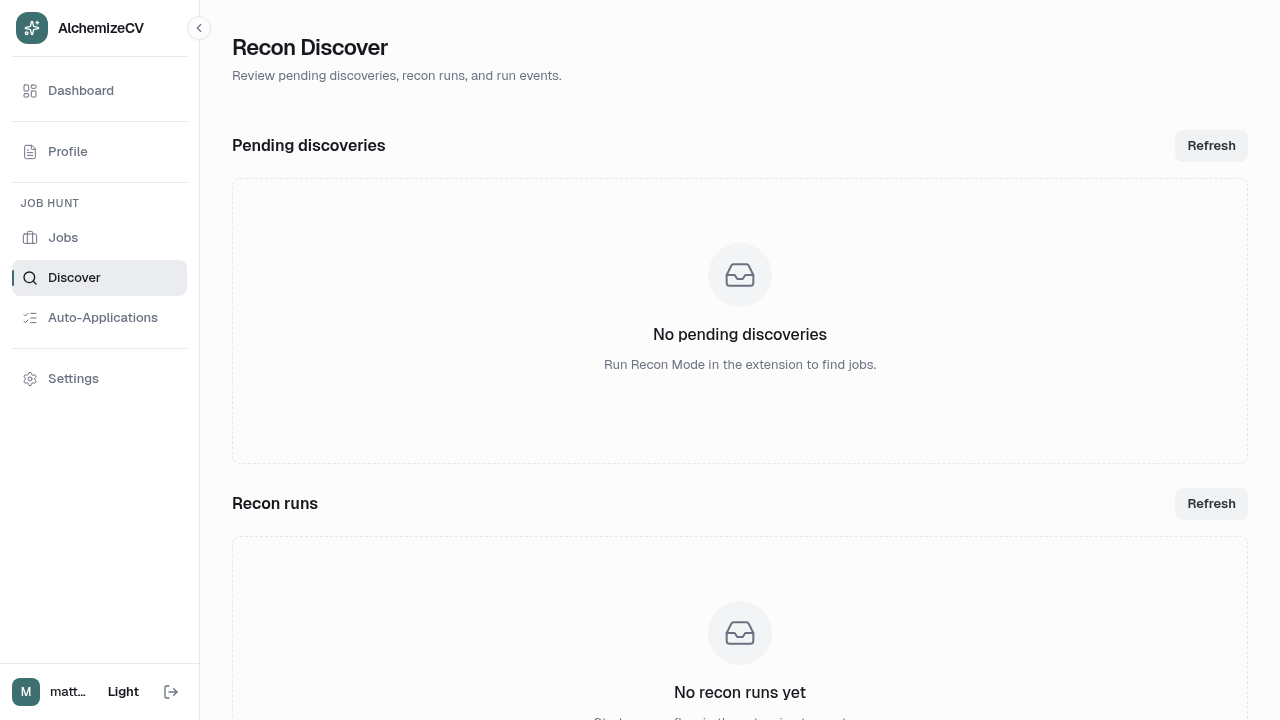

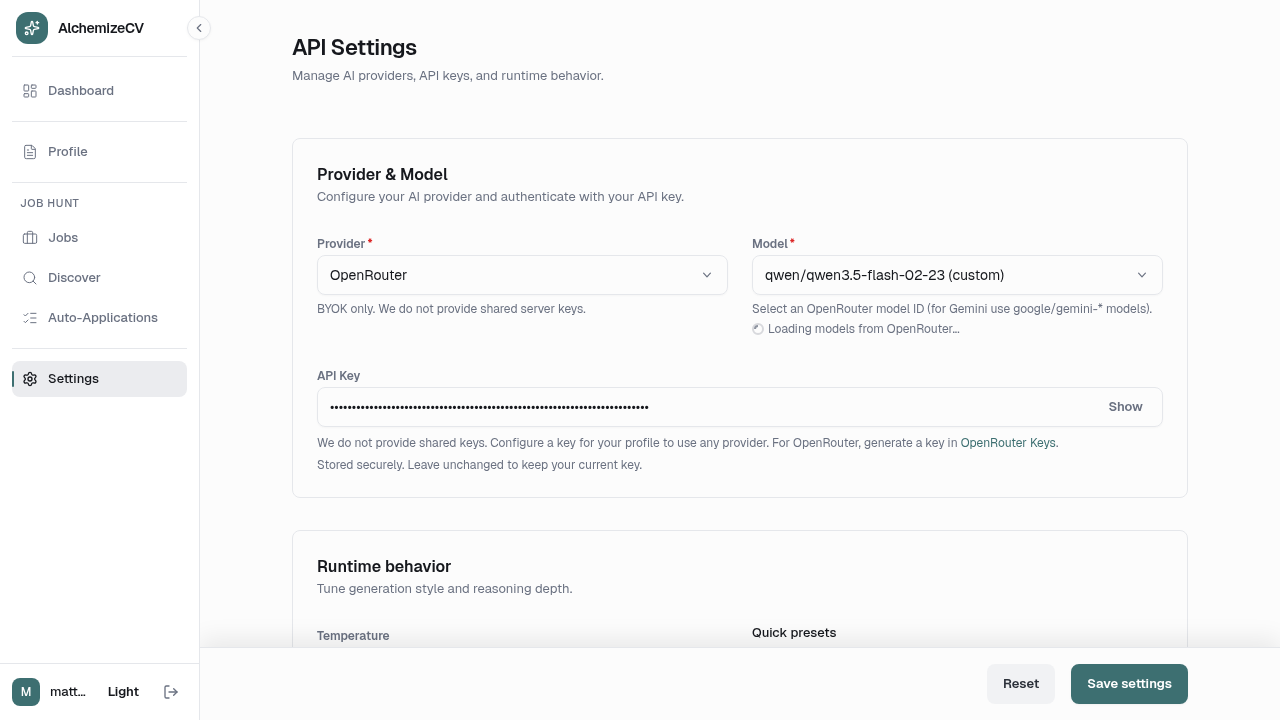

Product Proof

Screens that show the system in context.

Overview

What the product does and why I built it that way.

I built AlchemizeCV because resume tailoring is only one piece of a bigger problem: people need a reusable profile, grounded project context, clear privacy boundaries, and a workflow that holds up across dozens of applications. The product handles this with a replayable four-phase pipeline (raw extraction, synthesis, context-aware pruning, and assembly + rendering). Its central idea is context-aware pruning: as the LLM selects bullets for the most recent role, it passes that selection state forward, so a capability already claimed there is not repeated under an older role.
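To make the pruning idea concrete, here is a minimal Python sketch under stated assumptions. Each candidate bullet carries a hypothetical `capability` tag and a numeric `score` that stands in for the LLM's relevance judgement, and roles arrive newest-first. `Bullet`, `SelectionState`, `select_for_role`, and `prune_roles` are illustrative names, not AlchemizeCV's code. The only state passed forward is the set of capabilities already claimed.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Bullet:
    text: str
    capability: str  # hypothetical tag, e.g. "api-design"
    score: float  # stand-in for an LLM relevance judgement


@dataclass
class SelectionState:
    # Capabilities already claimed by more recent roles (carried forward).
    used_capabilities: set[str] = field(default_factory=set)


def select_for_role(candidates: list[Bullet], state: SelectionState, limit: int) -> list[Bullet]:
    """Pick up to `limit` bullets, skipping capabilities already claimed."""
    picked: list[Bullet] = []
    for bullet in sorted(candidates, key=lambda b: b.score, reverse=True):
        if bullet.capability in state.used_capabilities:
            continue  # already shown under a more recent role (or earlier in this one)
        picked.append(bullet)
        state.used_capabilities.add(bullet.capability)
        if len(picked) == limit:
            break
    return picked


def prune_roles(roles: list[tuple[str, list[Bullet]]], limit: int = 2) -> dict[str, list[Bullet]]:
    """Walk roles newest-first, passing one shared selection state forward."""
    state = SelectionState()
    return {name: select_for_role(bullets, state, limit) for name, bullets in roles}
```

If the recent role already claims `api-design`, an older role's higher-scoring API bullet is skipped and its next distinct capability is kept instead. Carrying a plain set forward keeps the no-repeat rule itself deterministic, even when the candidate scores come from a model.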

Under the hood, the web app is a React 18 + Vike thin client, FastAPI owns the workflow with content-hashed artifact caching, a Go service uses tree-sitter for deterministic repository analysis and entropy-based secret redaction, and a warm Playwright browser pool delivers sub-second PDF rendering.
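The Python sketch below shows one way to pair the four phases with content-hashed artifact caching. It assumes each phase is a pure function of a JSON-serializable input, and it keys each output by a SHA-256 hash of the phase name plus canonical JSON. The phase bodies, `ArtifactCache`, and `run_pipeline` are hypothetical stand-ins. The case study persists artifacts in PostgreSQL, not in an in-memory dict.

```python
import hashlib
import json
from typing import Any, Callable


def content_key(phase_name: str, payload: Any) -> str:
    """SHA-256 over the phase name plus a canonical JSON encoding of its input."""
    canonical = json.dumps(payload, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(f"{phase_name}:{canonical}".encode("utf-8")).hexdigest()


class ArtifactCache:
    """In-memory stand-in for a persistent artifact store (assumption)."""

    def __init__(self) -> None:
        self._store: dict[str, Any] = {}
        self.hits = 0

    def get_or_compute(self, key: str, compute: Callable[[], Any]) -> Any:
        if key in self._store:
            self.hits += 1
            return self._store[key]
        value = compute()
        self._store[key] = value
        return value


# Hypothetical phase bodies; only the four phase names come from the case study.
PHASES: list[tuple[str, Callable[[Any], Any]]] = [
    ("raw_extraction", lambda p: {"facts": sorted(p["profile"])}),
    ("synthesis", lambda p: {"bullets": [f"Did {f}" for f in p["facts"]]}),
    ("context_aware_pruning", lambda p: {"bullets": p["bullets"][:2]}),
    ("assembly_rendering", lambda p: "\n".join(p["bullets"])),
]


def run_pipeline(phases, initial: Any, cache: ArtifactCache) -> Any:
    """Run phases in order; each output is cached under the hash of its input."""
    payload = initial
    for name, fn in phases:
        key = content_key(name, payload)
        # Bind fn and payload now so the closure cannot see later loop values.
        payload = cache.get_or_compute(key, lambda fn=fn, p=payload: fn(p))
    return payload
```

Replaying the same profile hits the cache at every phase. Reordering the profile misses only `raw_extraction`: its normalized output is unchanged, so every downstream key matches and the later phases are reused.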

Architecture

The system shape behind the product.

Polyglot workflow platform with a React 18 + Vike web app, FastAPI feature slices for profile/jobs/settings/applications, a Go tree-sitter analysis service for GitHub project context, and PostgreSQL-backed persistence for users, jobs, runs, and artifacts.

Ingress: Caddy reverse proxy, HTTPS/TLS, cookie + token boundaries

Web App: React 18, Vike routing, TypeScript UI + live preview

Resume API: FastAPI feature slices, WebSocket progress, profile / jobs / settings flows

Generation Pipeline: raw extraction, synthesis, context-aware pruning, assembly + rendering

Code Context: Go portfolio service, tree-sitter parser pool, semantic digest artifacts

Data: PostgreSQL 16, run lineage + prompts, rendered artifacts + settings
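The Go code-context service is described as doing entropy-based secret redaction. The Python sketch below illustrates the general technique, not the Go implementation. It scores tokens of 20 or more characters from a base64-like alphabet by Shannon entropy, H = -sum(p * log2(p)) over character frequencies, and replaces a token when H meets a threshold. The token pattern, the 20-character minimum, and the 4.0 bits-per-character threshold are illustrative assumptions.

```python
import math
import re
from collections import Counter

# Illustrative assumptions: base64-like alphabet, 20+ chars, 4.0 bits/char.
TOKEN_RE = re.compile(r"[A-Za-z0-9+/=_\-]{20,}")
MIN_ENTROPY = 4.0


def shannon_entropy(token: str) -> float:
    """Bits per character: H = -sum(p * log2(p)) over character frequencies."""
    if not token:
        return 0.0
    n = len(token)
    return -sum((c / n) * math.log2(c / n) for c in Counter(token).values())


def redact_secrets(text: str, min_entropy: float = MIN_ENTROPY) -> str:
    """Replace long, high-entropy tokens with a placeholder; keep the rest."""

    def replace(match: re.Match) -> str:
        token = match.group(0)
        return "[REDACTED]" if shannon_entropy(token) >= min_entropy else token

    return TOKEN_RE.sub(replace, text)
```

Long, readable identifiers such as `repository_analysis_service` score well below random-looking keys, which makes entropy a more useful signal than length alone. The threshold trades false positives against missed secrets.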

Next Step

Explore the code

This case study focuses on the onboarding-to-application workflow, the replayable generation pipeline, the GitHub context model, and the polyglot services behind AlchemizeCV.